Research

Everywhere you look, the quantity of data is exponentially growing, fueled by the pervasive diffusion of digitalization in modern life. Moreover, the fields of science, engineering and technology are increasingly defined by a data driven approach to research and development. High-quality and large-scale data is continuously generated at a growing rate from sensor and scanning systems, as well as from numerical simulations in a number of science and technology domains. In this context of emerging data-intensive knowledge discovery and data analysis, one of the greatest scientific and engineering challenges of the 21st century will be to understand and make effective use of this growing body of information, and efficient interactive data exploration methodologies become paramount.

Everywhere you look, the quantity of data is exponentially growing, fueled by the pervasive diffusion of digitalization in modern life. Moreover, the fields of science, engineering and technology are increasingly defined by a data driven approach to research and development. High-quality and large-scale data is continuously generated at a growing rate from sensor and scanning systems, as well as from numerical simulations in a number of science and technology domains. In this context of emerging data-intensive knowledge discovery and data analysis, one of the greatest scientific and engineering challenges of the 21st century will be to understand and make effective use of this growing body of information, and efficient interactive data exploration methodologies become paramount.

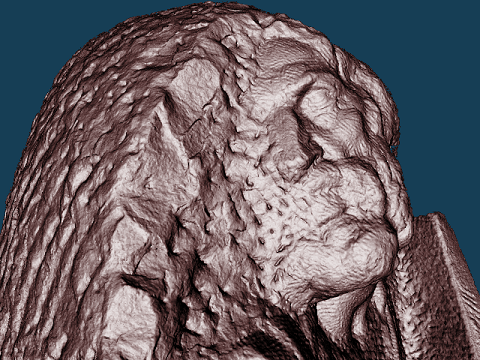

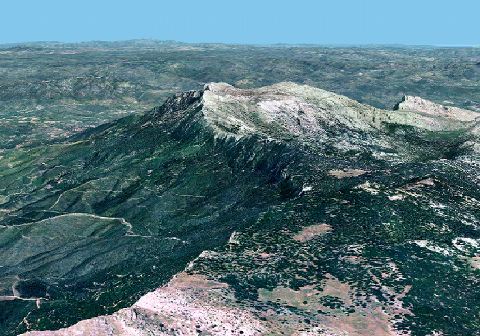

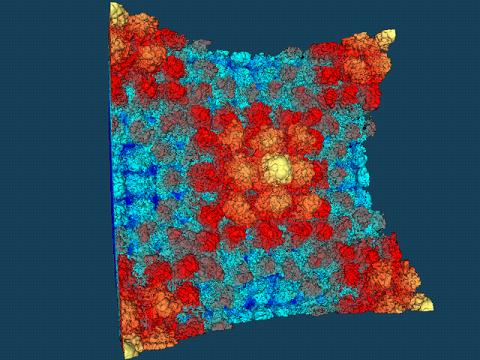

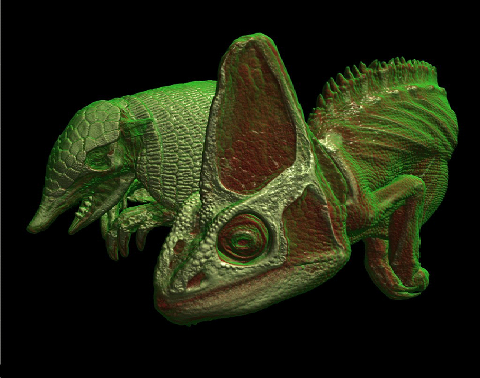

In this project, we will mainly focus on the visualization and analysis of spatial data and data embedded in 3D space which has a strong impact and usability in a wide range of application domains, including among many more: geo-visualization for natural resources management, health services or urban planning; medical imaging for diagnosis, treatment or surgery planning; virtual/augmented reality in the context of cultural heritage preservation; 3D imaging for material inspection; molecular visualization; CAD in reverse engineering or physical asset management and planning; training and simulation. Defining visual and interactive methods scalable with problem size is recognized, for instance, as one of the major challenges in many established or emerging fields, such as scientific visualization and visual analytics.

|

|

|

|

Moreover, not only applications are becoming more and more data-driven, but also the technology used to tackle these kinds of problems is rapidly witnessing a paradigm shift as far as the internal structure of  microprocessors and graphics cards are concerned. The current hardware paradigm shift to massively parallel solutions will lead to novel methods for handling massive data. However, most of current software development, be it education/research-related programming activities, or industrial application development, is still single- or few-core centric. However, with rapid advancement in many-core microprocessors and graphics cards, programming targeting multi-cores is becoming extremely important. Hence, programming skills, concepts, and experience that leverage data driven programming and many-core technologies will be in high demand in the near future.

microprocessors and graphics cards are concerned. The current hardware paradigm shift to massively parallel solutions will lead to novel methods for handling massive data. However, most of current software development, be it education/research-related programming activities, or industrial application development, is still single- or few-core centric. However, with rapid advancement in many-core microprocessors and graphics cards, programming targeting multi-cores is becoming extremely important. Hence, programming skills, concepts, and experience that leverage data driven programming and many-core technologies will be in high demand in the near future.

DIVA training program focuses on the core pipeline of visualization from massive amounts of data to visual interaction and understanding. This includes principal topics such as: data processing, feature extraction, compression, multiscale modeling, real-time rendering, display systems, user interaction, visual perception and cognition. Through collaboration between partners, as well as dedicated teaching and coaching, the fellows will gain a comprehensive view of massive data handling and visualization in science and technology.